by Jenny

on March 02, 2017

On hype-driven machine learning

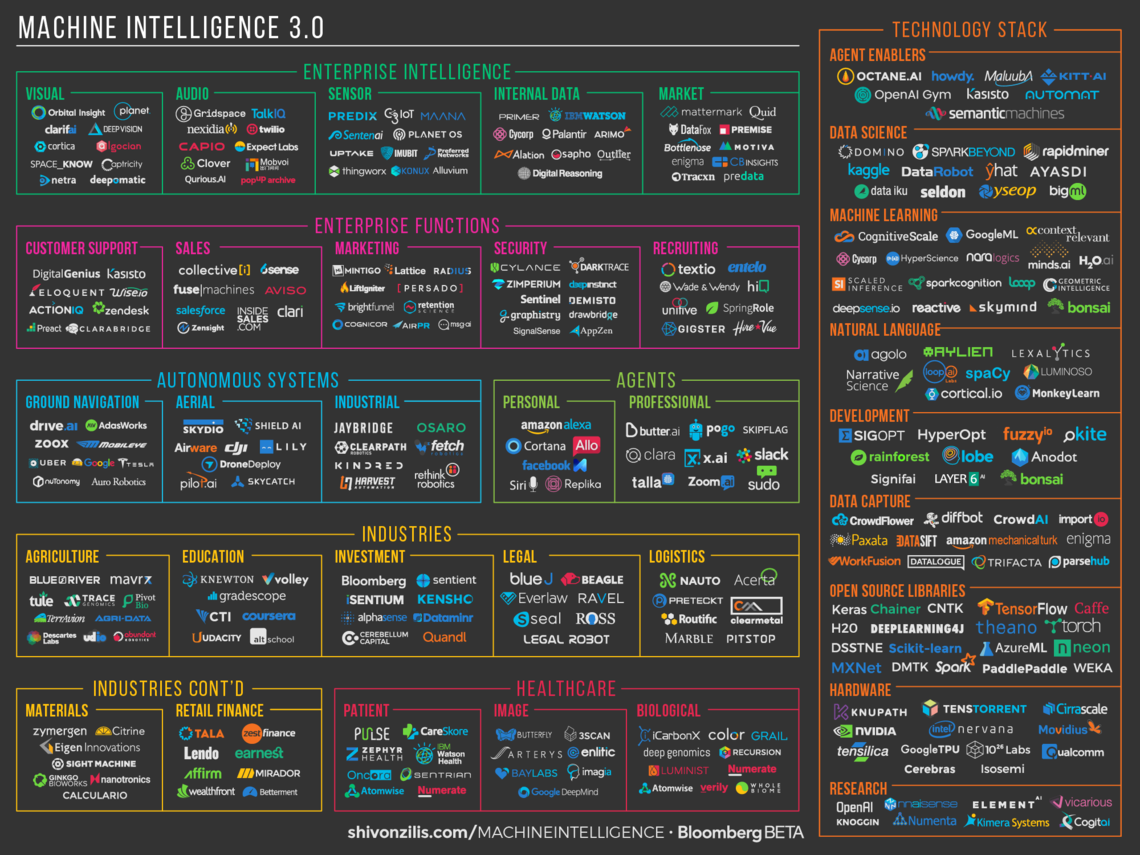

This is the competitive landscape for machine learning

Countless posts have been written lately on who and what you need to follow in order to navigate this landscape, and rightly so. It’s already enormous and, as with any industry that’s just awakening, the boundaries are not yet clear and everything is still up in the air.

In short, if you wanted to build a chat application for your business or for fun, and looked at this chart, you would be confused about where to start.

And no wonder: AI and machine learning are the latest buzzwords, and startups all across the globe are getting in on the game. Plenty are just tacking the words on in hopes of quick funding, and not without reason: investors are swarming in and some big exits have already happened. It’s a gold rush.

And it’s hectic.

In practice, a working conversational agent (or chat interface, or bot, or human-like automation) needs three things:

– A good language model

– Data to train the agent on

– Connector functions, i.e. the systems (chat and otherwise) the agent will interact with.

Let’s focus on the first two, as the third one is already covered adequately by the market.

The talk of the town is neural networks, and rightly so. The technology, which has been around for decades, is now very accessible. Computing power is cheap enough to build large neural nets, and companies are serving this need very well. Nvidia’s pivot comes to mind as one of the most successful examples.

Are Neural Networks the right solution for customer service improvement?

Our experience, however, shows that a “purely neural network” approach leads to extremely poor results when it analyses only a company’s own sentences, which are usually scarce even for big corporations (far fewer than a billion words).

Neural networks require a lot of representative data, something that most companies don’t have lying around. You can use generative neural nets to create simple question-answering bots, but the more specific the task becomes, the more this approach falls apart. Sure, anybody can download a big data set and train a TensorFlow model following a recipe to answer questions, but such a thing is useless if you want your bot to take specific actions: making a payment, placing an order, looking up customer info, etc.

To draw a real-life comparison, it is like asking a child to learn Finnish, and how to answer customer service questions, after listening to only the few thousand sentences in a customer service log, most of which are relatively similar to each other. Computers are good at analyzing huge amounts of data (in the exabyte range), and the logs of past customer service conversations are usually about a million times smaller than that.

Like all hard problems, it comes down not to the tools you use but to how you approach the problem. We believe that it pays off not to do hype-driven development, but to go with what works. For a multilingual environment with a limited amount of data available, you need to combine several different tools rather than just follow the trend.
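As one illustration of a tool that can work with only a handful of example sentences, here is a minimal sketch of routing a user message to a specific action by word overlap (Jaccard similarity). It is not a description of GetJenny's system: the action names, the example sentences, the `route` function and the `0.3` threshold are all hypothetical, chosen only to show the idea.

```python
import re
from typing import Dict, List, Optional, Set

# Hypothetical actions, each described by a few example sentences.
EXAMPLES: Dict[str, List[str]] = {
    "make_payment": ["I want to pay my invoice", "how can I make a payment"],
    "place_order": ["I would like to order a new router", "place an order for me"],
    "lookup_customer": ["show me my customer details", "what address do you have for me"],
}


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Share of words the two sets have in common (0.0 if either is empty)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def route(utterance: str, threshold: float = 0.3) -> Optional[str]:
    """Return the action whose best example overlaps most with the utterance,
    or None when nothing reaches the (assumed) similarity threshold."""
    tokens = tokenize(utterance)
    best_action, best_score = None, 0.0
    for action, sentences in EXAMPLES.items():
        for sentence in sentences:
            score = jaccard(tokens, tokenize(sentence))
            if score > best_score:
                best_action, best_score = action, score
    return best_action if best_score >= threshold else None


if __name__ == "__main__":
    print(route("I want to pay an invoice"))
    print(route("what is the weather like today"))
```

A matcher this simple would not cope with paraphrases or multiple languages on its own; the point is only that a few sentences per action can already drive a concrete action, and that such a component can sit alongside statistical models instead of being replaced by them.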

We are confident that our conclusions are correct, based on years of experience at the forefront of machine learning research:

Mario Alemi was an associate scientist at CERN for five years, developing algorithms for LHCb. He has been professor of Data Analysis for Physics in Milan and Uninsubria, Italy, and professor of Mathematics at École Supérieure de la Chambre du Commerce et de l’Industrie de Paris. At the moment he is scientific coordinator for the Master in Data Analysis at the Italian TAG Innovation School.

Mario has more than 50 peer-reviewed scientific publications, most of them on statistical techniques for data modelling and analysis, with more than 4,000 citations.

Mario has also been responsible for AI development at Your.MD, praised by various publications, including The Economist, as one of the most advanced AI-based symptom checkers available today.

Angelo Leto has many years of experience in software engineering, implementing machine learning algorithms for NLP at CELI, for medical imaging, and in other data-driven contexts. He has also worked at the Abdus Salam International Center for Theoretical Physics and the International School for Advanced Studies of Trieste, implementing data processing infrastructure and optimizing and porting scientific applications to distributed environments. Recently he gave a lecture about parallel computation with Apache Spark at the Master in High-Performance Computing.

GetJenny was selected to be a part of Nvidia’s Inception program. The Inception program gives us access to a great network of cutting-edge expertise and exclusive learning resources, as well as remote access to state-of-the-art technology.

If you would like to learn more about chatbots please read our guide on chatbots.

[Original hero image from the amazing Shivon Zilis]
